Log Management & Analysis

Centralize all your logs in one place. Powerful search, real-time analysis, and intelligent alerting help you troubleshoot faster and maintain system health.

Complete Log Management

Universal Log Ingestion

Collect logs from any source: servers, containers, applications, cloud services, and network devices.

Automatic Parsing

Intelligent parsing extracts structured data from unstructured logs automatically.

Powerful Search

Full-text search with regex support. Find any log entry in milliseconds across billions of records.

Advanced Filtering

Filter by time range, severity, source, or any custom field. Save filters for quick access.

Log-Based Alerting

Set alerts on log patterns, error rates, or any condition. Get notified before issues escalate.

Metrics Integration

Correlate logs with infrastructure metrics for complete observability and faster troubleshooting.

Log Collection & Processing

Ingestion Sources

- Syslog (RFC3164, RFC5424)

- Application logs (stdout, stderr)

- Container logs (Docker, Kubernetes)

- Cloud logs (AWS CloudWatch, Azure Monitor)

- Web server logs (Apache, Nginx)

- Custom log files

Parsing & Structuring

- JSON parsing

- Regex pattern matching

- Grok patterns

- Custom parsers

- Field extraction

- Data enrichment

Search & Analysis

- Full-text search

- Regex queries

- Time-based filtering

- Field-level search

- Aggregations

- Log analytics

Retention & Storage

- Flexible retention policies

- Automatic archiving

- Compression

- Hot/cold storage

- Data export

- Compliance-ready

Intelligent Log Alerting

🔍 Pattern Matching

Alert on specific log patterns, error messages, or suspicious activity. Use regex for complex matching.

📊 Threshold Alerts

Get notified when error rates, request counts, or any log metric exceeds defined thresholds.

⚡ Real-Time Detection

Process logs in real-time and trigger alerts within seconds of critical events.

🎯 Smart Grouping

Automatically group related log alerts to reduce noise and provide better context.

Unified Observability

Metrics Correlation

View logs and metrics side-by-side. Jump from a metric spike to related logs instantly.

Security Monitoring

Track authentication failures, access patterns, and security events across your infrastructure.

Metrics Correlation

Connect logs with infrastructure metrics for complete request-to-response visibility.

Dashboard Integration

Add log widgets to your dashboards. Monitor log volume, error rates, and trends visually.

Why Centralized Log Management?

Faster Troubleshooting

Find the root cause of issues in seconds, not hours. Search across all your logs from one interface.

Proactive Detection

Catch errors and anomalies before they impact users. Alert on patterns that indicate problems.

Security & Compliance

Maintain audit trails, track access, and meet compliance requirements with centralized log storage.

Better Insights

Analyze trends, understand user behavior, and optimize performance with comprehensive log data.

What Is Centralized Log Management?

Centralized log management is the practice of aggregating logs from all your servers, applications, containers, and network devices into a single searchable platform. Instead of SSH-ing into individual machines and tailing log files, teams can search, filter, and analyze logs from their entire infrastructure in one place.

As infrastructure grows, scattered logs become a critical problem. An error that spans multiple services — a failed API call that triggers a retry storm, a database timeout that cascades to the frontend — leaves traces across dozens of log files on different hosts. Without centralization, reconstructing the event timeline means manually correlating timestamps across systems, a process that turns a 10-minute diagnosis into a multi-hour investigation.
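To make the timeline problem concrete, here is a minimal sketch of the correlation a centralized platform performs for you: merging per-host logs into one time-ordered view. The host names, log lines, and the `merged_timeline` helper are hypothetical, and the sketch assumes every line starts with an ISO 8601 timestamp and that each host's log is already in time order.

```python
import heapq
from datetime import datetime

# Hypothetical per-host logs; each list is already sorted by time.
LOGS = {
    "api-1": [
        "2024-05-01T10:00:01 upstream request failed",
        "2024-05-01T10:00:03 retrying request",
    ],
    "db-1": [
        "2024-05-01T10:00:00 query timeout after 30s",
    ],
}

def parse_line(host, line):
    """Split 'timestamp message' into a sortable (timestamp, host, message) tuple."""
    stamp, _, message = line.partition(" ")
    return datetime.fromisoformat(stamp), host, message

def merged_timeline(logs_by_host):
    """Merge time-ordered per-host logs into a single time-ordered list."""
    streams = [
        [parse_line(host, line) for line in lines]
        for host, lines in logs_by_host.items()
    ]
    return list(heapq.merge(*streams))

for stamp, host, message in merged_timeline(LOGS):
    print(stamp.isoformat(), host, message)
```

With the sample data, the database timeout sorts ahead of the API errors it caused, which is the ordering you would otherwise have to reconstruct by hand.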

Centralized logging also addresses compliance and audit requirements. Regulations like GDPR, HIPAA, SOC 2, and PCI-DSS often require that logs be retained for specific periods, protected from tampering, and available for review upon request. A centralized system with configurable retention policies, access controls, and immutable storage meets these requirements far more reliably than individual host-level log files that can be rotated, overwritten, or lost when a server is decommissioned.

How the Bleemeo Log Pipeline Works

Collection

The Glouton agent collects logs from multiple sources simultaneously: local log files (any path or glob pattern), syslog (RFC3164 and RFC5424), container stdout/stderr (Docker, containerd, Kubernetes pods), and application output. In Kubernetes, Glouton discovers and collects logs from all pods automatically. You can also send logs directly via OTLP over gRPC or HTTP from your applications.

Processing & Parsing

Logs pass through an OpenTelemetry Collector-based processing pipeline. Glouton supports JSON parsing, regex patterns, Grok patterns, and custom Stanza operators. Known log formats (nginx_access, apache_combined, syslog) are parsed automatically. The pipeline extracts timestamps, severity levels, and structured fields, transforming raw text into queryable data.
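As an illustration of what parsing a known format involves, the sketch below turns one access log line into structured fields with a regular expression. It is not Glouton's parser: the pattern, the `parse_access_line` helper, and the rule that maps 5xx responses to ERROR are assumptions made for this example.

```python
import re
from datetime import datetime

# Leading part of the common/combined access log layout.
ACCESS_LINE = re.compile(
    r'(?P<remote_addr>\S+) \S+ (?P<remote_user>\S+) '
    r'\[(?P<time_local>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<body_bytes_sent>\d+|-)'
)

SAMPLE = (
    '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] '
    '"GET /api/orders HTTP/1.1" 502 157 "-" "curl/8.0"'
)

def parse_access_line(line):
    """Return a dict of structured fields, or None if the line does not match."""
    match = ACCESS_LINE.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    fields["status"] = int(fields["status"])
    # Extract a real timestamp from the textual one.
    fields["timestamp"] = datetime.strptime(
        fields.pop("time_local"), "%d/%b/%Y:%H:%M:%S %z"
    )
    # Assumed severity rule for this sketch: server errors are ERROR.
    fields["severity"] = "ERROR" if fields["status"] >= 500 else "INFO"
    return fields

print(parse_access_line(SAMPLE))
```

Once a line is in this shape, fields such as `status` or `path` can be filtered and aggregated directly instead of being matched as text.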

Filtering & Enrichment

Before transmission, logs can be filtered using OpenTelemetry Transformation Language expressions, regex patterns, severity levels, or resource attributes. You can exclude noisy health-check logs, drop debug-level messages in production, or include only specific containers. Logs are enriched with hostname, service name, and container metadata automatically.
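The filtering rules described above are expressed in Glouton's configuration; the Python below only sketches the decision logic so the behavior is easy to reason about. The record layout, the health-check paths, and the `keep` function are hypothetical.

```python
import re

# Assumed health-check paths for this example.
HEALTH_CHECK = re.compile(r'"GET /(healthz|ping)\b')
SEVERITY_ORDER = {"DEBUG": 0, "INFO": 1, "WARN": 2, "ERROR": 3, "FATAL": 4}

def keep(record, min_severity="INFO", containers=None):
    """Decide whether a log record should be transmitted."""
    # Drop anything below the minimum severity (e.g. DEBUG in production).
    if SEVERITY_ORDER[record["severity"]] < SEVERITY_ORDER[min_severity]:
        return False
    # Exclude noisy health-check requests.
    if HEALTH_CHECK.search(record["body"]):
        return False
    # Optionally include only specific containers.
    if containers is not None and record["container"] not in containers:
        return False
    return True

records = [
    {"severity": "DEBUG", "body": "cache miss", "container": "api"},
    {"severity": "INFO", "body": '10.0.0.1 - - "GET /healthz HTTP/1.1" 200', "container": "api"},
    {"severity": "ERROR", "body": "payment failed", "container": "api"},
]
print([r["body"] for r in records if keep(r)])
```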

Compressed Transmission

Processed logs are batched and compressed before being sent to Bleemeo Cloud. This minimizes network bandwidth usage and ensures reliable delivery even on constrained connections. The agent handles retries and backpressure gracefully, buffering logs locally if the connection is temporarily unavailable.
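A rough sketch of why batching and compression pay off: log records are repetitive, so a compressed batch is much smaller than the same records sent one by one. The newline-delimited JSON encoding and the helper names below are assumptions for illustration, not the agent's actual wire format.

```python
import gzip
import json

def batches(records, max_records):
    """Yield consecutive slices of at most max_records records."""
    for start in range(0, len(records), max_records):
        yield records[start:start + max_records]

def encode_batch(batch):
    """Serialize a batch as newline-delimited JSON, then gzip it."""
    payload = "\n".join(json.dumps(r, sort_keys=True) for r in batch)
    return gzip.compress(payload.encode("utf-8"))

def decode_batch(blob):
    """Inverse of encode_batch, as the receiving side would do."""
    text = gzip.decompress(blob).decode("utf-8")
    return [json.loads(line) for line in text.splitlines()]

records = [
    {"severity": "INFO", "service": "checkout", "message": f"order {i} accepted"}
    for i in range(250)
]
for batch in batches(records, 100):
    raw_size = sum(len(json.dumps(r)) for r in batch)
    print(len(batch), "records:", raw_size, "bytes raw,", len(encode_batch(batch)), "bytes compressed")
```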

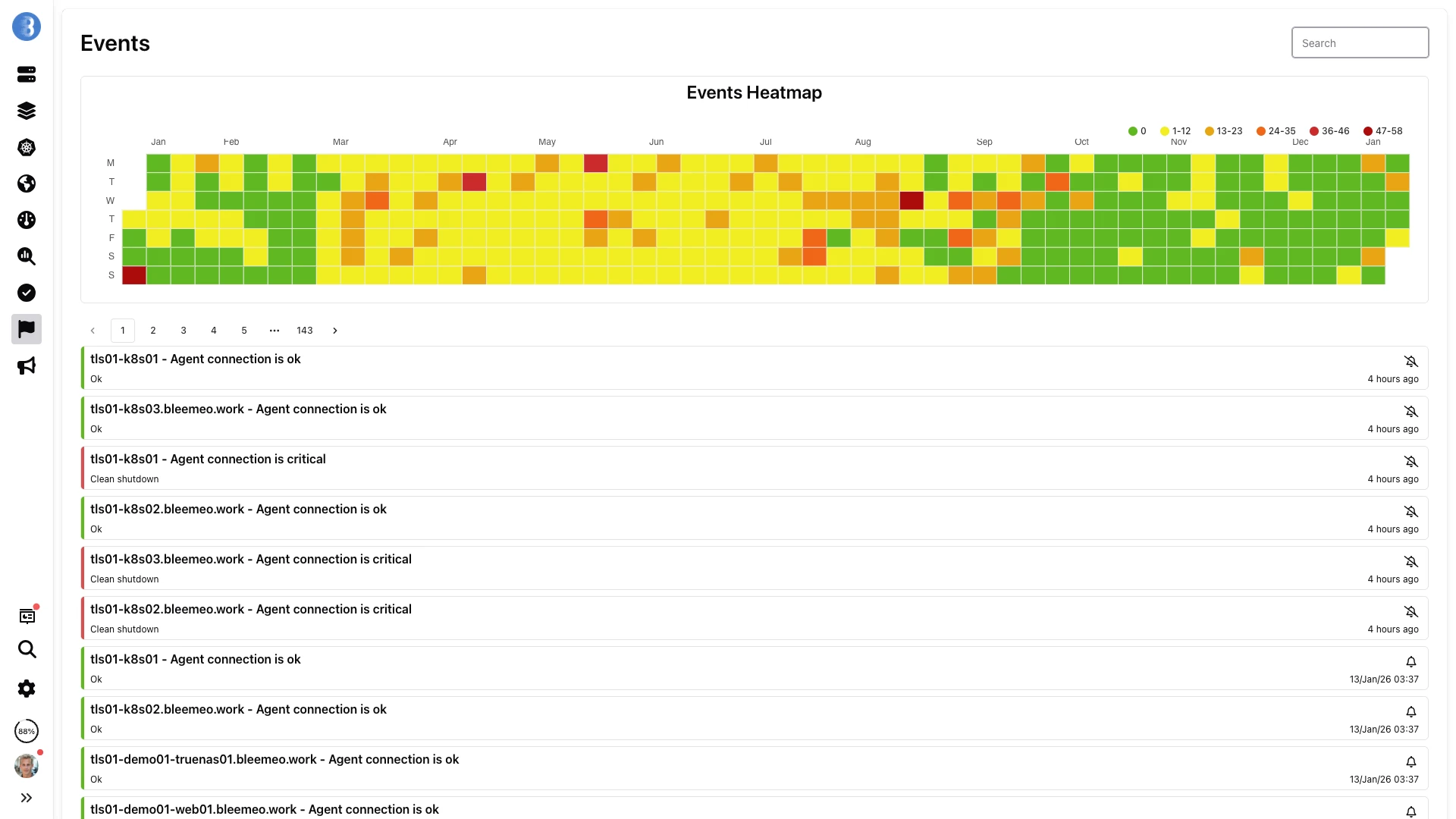

Search & Analysis

In the Bleemeo Cloud panel, you can run full-text searches across all logs, use regex queries, and filter by time range, severity, source, or any structured field. Results are returned in milliseconds even across large volumes. You can save frequently used queries, create log-based alerts, and correlate logs with infrastructure metrics in unified dashboards.

OpenTelemetry Foundation

Glouton embeds an OpenTelemetry Collector at its core. Enable the log pipeline with a single configuration line: log.opentelemetry.enable: true. Once enabled, the agent uses its built-in auto-discovery to detect application log files, container stdout/stderr, and service-specific log paths. The OTel-based architecture means you can also send logs directly from your applications using any OpenTelemetry SDK — the agent accepts OTLP over both gRPC (port 4317) and HTTP (port 4318).
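In practice an OpenTelemetry SDK builds and sends OTLP requests for you. To show what travels over the HTTP port, the sketch below builds a single log record in OTLP's JSON encoding and posts it to the standard `/v1/logs` path using only the Python standard library. It assumes the agent runs on localhost and accepts the JSON encoding on port 4318; `build_payload`, `send`, the scope name, and the sample attributes are hypothetical.

```python
import json
import time
import urllib.request

# Severity numbers defined by the OpenTelemetry log data model.
SEVERITY_NUMBER = {"DEBUG": 5, "INFO": 9, "WARN": 13, "ERROR": 17, "FATAL": 21}

def build_payload(service_name, severity, body, attributes=None, now_ns=None):
    """Build an OTLP/JSON export request containing one log record."""
    record = {
        # 64-bit integers are encoded as strings in OTLP/JSON.
        "timeUnixNano": str(now_ns if now_ns is not None else time.time_ns()),
        "severityNumber": SEVERITY_NUMBER[severity],
        "severityText": severity,
        "body": {"stringValue": body},
        "attributes": [
            {"key": key, "value": {"stringValue": str(value)}}
            for key, value in (attributes or {}).items()
        ],
    }
    return {
        "resourceLogs": [{
            "resource": {"attributes": [
                {"key": "service.name", "value": {"stringValue": service_name}},
            ]},
            "scopeLogs": [{"scope": {"name": "example"}, "logRecords": [record]}],
        }]
    }

def send(payload, endpoint="http://localhost:4318/v1/logs"):
    """POST the payload to an OTLP/HTTP endpoint (assumed to be the local agent)."""
    request = urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status

payload = build_payload("checkout", "ERROR", "payment failed", {"order_id": 42})
print(json.dumps(payload, indent=2))
# send(payload)  # uncomment once an agent is listening on port 4318
```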

Receivers & Inputs

The agent supports multiple log receivers simultaneously. The file receiver watches any path or glob pattern (e.g., /var/log/nginx/*.log) and handles rotation automatically. The OTLP receiver accepts structured logs from applications via gRPC or HTTP. For legacy systems, Fluent Bit can forward logs to Glouton's OTLP endpoint. In Docker environments, container labels like bleemeo.log.enable and bleemeo.log.format control per-container log collection behavior without modifying application code.

Pattern-Based Metric Generation

Define regex patterns in Glouton's configuration to transform log lines into countable metrics. For example, count lines matching level=ERROR to create an error-rate metric, or extract HTTP status codes from access logs to track 4xx and 5xx rates over time. These log-derived metrics appear in dashboards alongside infrastructure metrics, bridging the observability gap between logs and monitoring without requiring application changes.
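The idea of turning log lines into metrics can be sketched in a few lines. The patterns and helper names below are illustrative assumptions rather than Glouton's configuration syntax: one helper counts lines matching level=ERROR, the other groups HTTP status codes into classes such as 4xx and 5xx.

```python
import re
from collections import Counter

ERROR_LINE = re.compile(r"level=ERROR\b")
# Status code that follows the quoted request in an access log line.
STATUS_CODE = re.compile(r'" (?P<status>\d{3}) ')

def count_errors(lines):
    """Count application log lines at ERROR level."""
    return sum(1 for line in lines if ERROR_LINE.search(line))

def status_classes(access_lines):
    """Count access log lines per status class (2xx, 4xx, 5xx, ...)."""
    counts = Counter()
    for line in access_lines:
        match = STATUS_CODE.search(line)
        if match:
            counts[match.group("status")[0] + "xx"] += 1
    return counts

app_lines = [
    "ts=2024-05-01T10:00:00 level=INFO msg=started",
    "ts=2024-05-01T10:00:01 level=ERROR msg=timeout",
    "ts=2024-05-01T10:00:02 level=ERROR msg=timeout",
]
access_lines = [
    '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] "GET / HTTP/1.1" 200 512 "-" "curl/8.0"',
    '203.0.113.7 - - [10/Oct/2024:13:55:37 +0000] "GET /missing HTTP/1.1" 404 0 "-" "curl/8.0"',
    '203.0.113.7 - - [10/Oct/2024:13:55:38 +0000] "GET /api HTTP/1.1" 502 157 "-" "curl/8.0"',
]
print(count_errors(app_lines), dict(status_classes(access_lines)))
```

Sampling these counters at a fixed interval gives a rate that can be graphed and alerted on like any other metric.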

Kubernetes Log Integration

When deployed as a DaemonSet, Glouton captures stdout/stderr from all pods automatically. Pod annotations give you fine-grained control: glouton.log_enable: "false" excludes noisy pods, glouton.log_format specifies the parser (e.g., json, nginx_access), and glouton.log_filter applies severity or regex filters per pod. Namespace-level defaults can be set in the Glouton ConfigMap, allowing teams to define log policies for their namespaces independently.

Supported Log Sources in Detail

File-Based Logs

Monitor any log file by path or glob pattern. Glouton tails files efficiently and tracks file rotation and truncation automatically. It supports multi-line log entries such as stack traces and exception blocks, which makes it a good fit for application logs, web server access/error logs, and custom application output files.
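Multi-line handling usually comes down to one rule: a line that does not look like the start of a new entry belongs to the previous one. The sketch below assumes entries begin with a date and time; the pattern and the `group_entries` helper are hypothetical and only illustrate the technique.

```python
import re

# Assumed start-of-entry marker: a leading date and time.
ENTRY_START = re.compile(r"^\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}")

def group_entries(lines):
    """Attach continuation lines (e.g. stack frames) to the entry they follow."""
    entries = []
    for line in lines:
        if ENTRY_START.match(line) or not entries:
            entries.append(line)
        else:
            entries[-1] += "\n" + line
    return entries

lines = [
    "2024-05-01 10:00:00 ERROR request failed",
    "java.lang.IllegalStateException: no connection",
    "\tat com.example.Pool.acquire(Pool.java:42)",
    "\tat com.example.Handler.handle(Handler.java:17)",
    "2024-05-01 10:00:01 INFO request retried",
]
for entry in group_entries(lines):
    print(repr(entry))
```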

Container Logs

Automatically collect stdout/stderr from all Docker and Kubernetes containers. In Kubernetes, use pod annotations (glouton.log_enable, glouton.log_format, glouton.log_filter) to control per-pod behavior. Container name, image, and labels are attached as metadata for filtering in the dashboard.

Syslog

Receive logs via syslog protocol (RFC3164 and RFC5424) over UDP or TCP. Configure your network devices, firewalls, and legacy systems to forward syslog to Glouton. Facility and severity are parsed automatically. This is the standard method for collecting logs from infrastructure that does not support modern log protocols.
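Facility and severity are both packed into the priority value at the start of every syslog message: the priority is the facility multiplied by 8, plus the severity. A minimal sketch of that decoding, with a hypothetical `decode_priority` helper and a sample message in the RFC3164 style:

```python
import re

PRI = re.compile(r"^<(\d{1,3})>")
# Severity keywords in numeric order, 0 (emergency) through 7 (debug).
SEVERITIES = ["emerg", "alert", "crit", "err", "warning", "notice", "info", "debug"]

def decode_priority(message):
    """Return (facility_number, severity_keyword) from a syslog message."""
    match = PRI.match(message)
    if match is None:
        raise ValueError("missing <PRI> header")
    priority = int(match.group(1))
    if priority > 191:  # highest valid value: facility 23, severity 7
        raise ValueError("priority out of range")
    facility, severity = divmod(priority, 8)
    return facility, SEVERITIES[severity]

sample = "<34>Oct 11 22:14:15 mymachine su: 'su root' failed for lonvick on /dev/pts/8"
print(decode_priority(sample))
```

For the sample, priority 34 decodes to facility 4 (security/authorization messages) with critical severity.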

OTLP (OpenTelemetry)

Send logs directly from your applications using the OpenTelemetry SDK via OTLP over gRPC or HTTP. This gives you full control over log structure, severity, and attributes at the application level. OTLP is the modern standard for telemetry data and integrates seamlessly with Bleemeo's OpenTelemetry-native pipeline.

Use Cases

Application Debugging

Search across all application instances to find the exact request that triggered an error. Correlate application logs with database query logs and infrastructure metrics to identify whether the root cause is in the code, the data layer, or the underlying server.

Security Auditing

Centralize authentication logs, access logs, and system audit trails. Detect brute-force login attempts, unauthorized access patterns, and suspicious privilege escalations across your infrastructure. Retain logs for forensic analysis after security incidents.
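As an example of the kind of detection centralized authentication logs make possible, the sketch below flags source addresses with repeated failed logins inside a sliding time window. The log line pattern follows the usual OpenSSH wording, but the thresholds, the event layout of (unix_time, line) pairs, and the `brute_force_sources` helper are assumptions for illustration.

```python
import re
from collections import defaultdict, deque

FAILED_LOGIN = re.compile(
    r"Failed password for (?:invalid user )?\S+ from (?P<ip>\S+)"
)

def brute_force_sources(events, threshold=5, window_seconds=60):
    """Return IPs with at least `threshold` failures within `window_seconds`.

    `events` is an iterable of (unix_time, log_line) pairs in time order.
    """
    recent = defaultdict(deque)
    flagged = set()
    for timestamp, line in events:
        match = FAILED_LOGIN.search(line)
        if match is None:
            continue
        attempts = recent[match.group("ip")]
        attempts.append(timestamp)
        # Forget attempts that fell out of the window.
        while timestamp - attempts[0] > window_seconds:
            attempts.popleft()
        if len(attempts) >= threshold:
            flagged.add(match.group("ip"))
    return flagged

events = sorted(
    [(t, "Failed password for root from 203.0.113.5 port 22 ssh2") for t in (0, 10, 20, 30, 40)]
    + [(t, "Failed password for invalid user admin from 198.51.100.9 port 22 ssh2") for t in (0, 150, 300, 450, 600)]
    + [(5, "Accepted password for alice from 192.0.2.1 port 22 ssh2")]
)
print(brute_force_sources(events))
```

Because every host's authentication log lands in the same place, the same check also catches an attacker who spreads attempts across many servers.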

Compliance & Retention

Meet GDPR, HIPAA, SOC 2, and PCI-DSS log retention requirements with configurable retention policies. Centralized, immutable storage ensures logs cannot be tampered with or accidentally deleted on individual hosts.

Metrics from Logs

Generate custom metrics from log patterns — count errors per second, track specific event rates, or measure request latencies from access logs. These log-derived metrics appear in dashboards alongside infrastructure metrics, bridging the gap between logs and monitoring.

Kubernetes Troubleshooting

Collect logs from all pods automatically when Glouton is deployed via Helm. Correlate pod logs with container restart events, resource pressure, and node conditions. Quickly identify the log message that preceded a CrashLoopBackOff or OOMKilled event.

Performance Analysis

Analyze access logs to identify slow endpoints, high-error-rate pages, and traffic patterns. Combine web server logs with application and database logs to trace performance bottlenecks from the frontend all the way to the query layer.

Log Management Best Practices

Use Structured Logging

Emit logs in JSON or other structured formats instead of plain text. Structured logs are easier to parse, filter, and query. Fields like timestamp, severity, service name, request ID, and user ID become first-class citizens that can be indexed and searched independently, turning log analysis from string matching into database-like querying.
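A minimal sketch of structured logging with Python's standard logging module follows. The field names mirror the ones listed above; the `JsonFormatter` class, the `service` and `request_id` attributes, and the mapping of Python's WARNING and CRITICAL to WARN and FATAL are choices made for this example.

```python
import json
import logging
import sys
from datetime import datetime, timezone

# Map Python's level names onto a DEBUG/INFO/WARN/ERROR/FATAL scale.
LEVEL_NAMES = {"WARNING": "WARN", "CRITICAL": "FATAL"}

class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        entry = {
            "timestamp": datetime.fromtimestamp(
                record.created, tz=timezone.utc
            ).isoformat(),
            "severity": LEVEL_NAMES.get(record.levelname, record.levelname),
            "service": getattr(record, "service", "unknown"),
            "request_id": getattr(record, "request_id", None),
            "message": record.getMessage(),
        }
        return json.dumps(entry)

handler = logging.StreamHandler(sys.stdout)
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("checkout")
logger.addHandler(handler)
logger.setLevel(logging.INFO)

# Extra fields become top-level JSON keys that can be searched independently.
logger.error(
    "payment failed for %s", "order-7",
    extra={"service": "checkout", "request_id": "req-123"},
)
```

Writing one JSON object per line to stdout keeps the application unaware of the log pipeline, while a JSON parser on the collection side can recover every field.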

Set Consistent Severity Levels

Adopt a consistent severity scale across all services (DEBUG, INFO, WARN, ERROR, FATAL). An "error" in one service should mean the same thing as in another. This consistency enables cross-service filtering — show me all ERROR and FATAL logs from the last hour across every service — and prevents important messages from being buried in noise.

Filter Noise at the Source

Health-check endpoints, load-balancer probes, and debug messages can generate enormous volumes of low-value logs. Use Glouton's filtering capabilities to exclude these at the agent level before they consume bandwidth and storage. This reduces cost while keeping your log search results focused on meaningful events.

Correlate Logs with Metrics

A CPU spike becomes actionable when you can see the log messages that preceded it. Enable both metric collection and log collection on the same agent, and use Bleemeo's unified dashboards to view them side-by-side. This correlation transforms troubleshooting from a guessing game into a directed investigation.

Alert on Log Patterns

Do not wait for someone to search logs manually — configure alerts on critical patterns. Alert when "OutOfMemoryError" appears in Java logs, when "FATAL" severity exceeds N per minute, or when a specific error message appears for the first time. Proactive log-based alerting catches application-level issues that infrastructure metrics alone cannot detect.
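The three alert conditions mentioned above can be sketched as a single pass over parsed log records. This is an illustration of the logic, not Bleemeo's alerting engine: the record layout of (unix_time, severity, message) tuples, the fixed one-minute buckets, and the `evaluate_alerts` helper are assumptions.

```python
from collections import Counter

def evaluate_alerts(records, fatal_per_minute=3, known_errors=()):
    """Return (kind, unix_time, detail) alerts for time-ordered records."""
    alerts = []
    seen = set(known_errors)
    fatal_by_minute = Counter()
    for timestamp, severity, message in records:
        # 1. Pattern alert: a specific string appears in the message.
        if "OutOfMemoryError" in message:
            alerts.append(("pattern", timestamp, message))
        # 2. Threshold alert: fires once when a minute exceeds the limit.
        if severity == "FATAL":
            minute = int(timestamp // 60)
            fatal_by_minute[minute] += 1
            if fatal_by_minute[minute] == fatal_per_minute + 1:
                detail = f"more than {fatal_per_minute} FATAL logs in one minute"
                alerts.append(("threshold", timestamp, detail))
        # 3. First-seen alert: an error message never observed before.
        if severity in ("ERROR", "FATAL") and message not in seen:
            seen.add(message)
            alerts.append(("first_seen", timestamp, message))
    return alerts

records = [
    (0, "ERROR", "java.lang.OutOfMemoryError: Java heap space"),
    (1, "FATAL", "database unreachable"),
    (2, "FATAL", "database unreachable"),
    (3, "FATAL", "database unreachable"),
    (4, "FATAL", "database unreachable"),
    (70, "INFO", "database reconnected"),
]
for alert in evaluate_alerts(records):
    print(alert)
```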

Want to go further?

Read the Documentation

Frequently Asked Questions

Everything you need to know about Bleemeo's log management

What log sources can I collect from?

Bleemeo can collect logs from virtually any source. This includes: log files (any file path or pattern), syslog (RFC3164 and RFC5424), container logs (Docker, containerd, Kubernetes pods), application stdout/stderr, and logs sent via OTLP over gRPC or HTTP. Glouton automatically discovers and collects logs from running containers and services when auto-discovery is enabled.

How does Bleemeo parse and structure my logs?

Glouton uses an OpenTelemetry Collector-based log processing pipeline. It supports JSON parsing, regex pattern matching, Grok patterns, and custom parsers using Stanza operators. You can define known log formats (like nginx_access, apache_combined) and apply them to files, containers, or services. The system extracts timestamps, severity levels, and structured fields automatically.

Can I filter or exclude certain logs?

Yes, Bleemeo offers powerful filtering capabilities. You can filter logs using OpenTelemetry Transformation Language expressions, regex patterns on log bodies or attributes, severity levels, and resource attributes. Filters can include or exclude logs based on multiple conditions. You can also disable log collection for specific containers using the glouton.log_enable=false label.

How do I search logs in Bleemeo?

Bleemeo provides a powerful search interface in the cloud panel. You can perform full-text searches across all your logs, use regex queries for complex pattern matching, and filter by time range, severity level, source, or any structured field. Search results are returned in milliseconds, even across large volumes of log data. You can also save frequently used queries for quick access.

Can I set up alerts based on log patterns?

Yes, Bleemeo supports log-based alerting. You can create alerts triggered by specific log patterns, error messages, or regex matches. You can also alert on threshold conditions like error rate exceeding a certain number per minute. Alerts are processed in real-time, so you're notified within seconds of a critical log event occurring.

How long are my logs retained?

Log retention depends on your plan and can be configured to meet your requirements. Bleemeo supports flexible retention policies with automatic archiving and compression. Hot storage keeps recent logs immediately accessible, while older logs can be moved to cold storage. This allows you to balance search performance with storage costs while maintaining compliance requirements.

Can I correlate logs with metrics?

Yes, this is a core strength of Bleemeo's unified observability approach. You can view logs and metrics side-by-side in dashboards, jump from a metric anomaly directly to related logs, and see the full context of an issue in one place. This correlation enables faster troubleshooting by providing infrastructure metrics alongside application logs.

How do I collect logs from Kubernetes?

When Glouton is deployed in Kubernetes (via Helm chart), it automatically discovers and collects logs from all pods. You can control log collection per pod using the glouton.log_enable annotation. Custom log formats and filters can be applied using pod annotations (glouton.log_format, glouton.log_filter) or via Glouton configuration. Container stdout/stderr logs are captured without any additional configuration.

What is the performance impact of log collection?

Glouton's log processing is designed to be lightweight and efficient. It uses a streaming architecture that processes logs incrementally without loading entire files into memory. Log transmission is batched and compressed to minimize network overhead. For very high-volume scenarios, you can use filters to reduce the volume of logs sent to the cloud while keeping local processing minimal.

Can I generate metrics from my logs?

Yes, Bleemeo can create metrics from log patterns. By defining regex patterns in your configuration, Glouton counts matching log lines and exposes them as metrics (e.g., errors per second, specific event rates). This allows you to alert on log-derived metrics, track trends over time, and visualize log patterns in dashboards alongside your infrastructure metrics.

Start Managing Your Logs Today

Set up centralized logging in minutes. No complex configuration required.

Start Free Trial