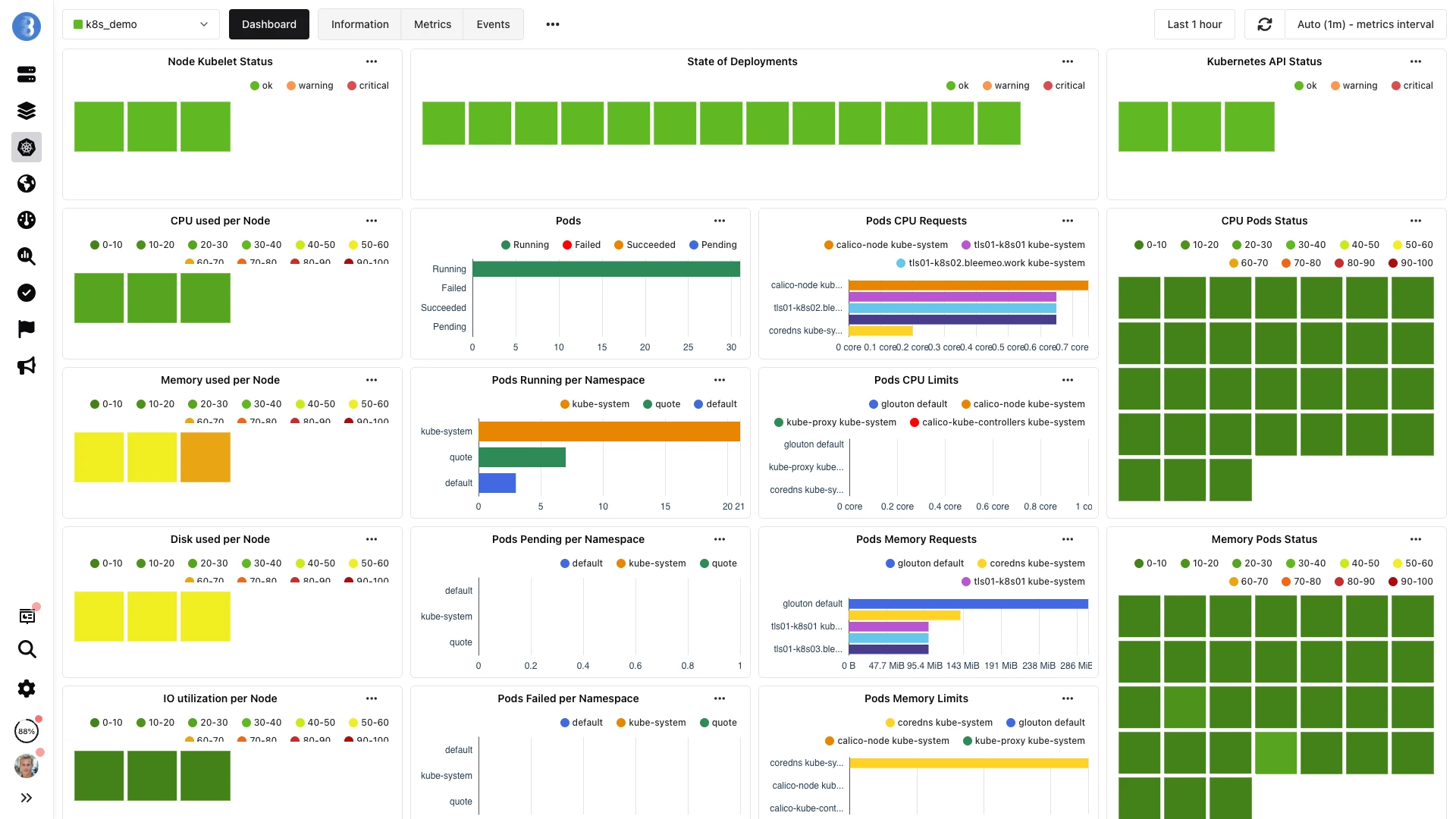

Kubernetes Monitoring

Complete observability for your Kubernetes clusters. Monitor nodes, pods, containers, and services with automatic discovery, Prometheus compatibility, and intelligent alerting.

Full-Stack Kubernetes Observability

From cluster health to individual container metrics, get complete visibility into your Kubernetes environment.

Cluster Level

Control plane health, API server latency, etcd performance, scheduler metrics

Node Level

CPU, memory, disk, network, kubelet status, node conditions

Pod Level

Pod lifecycle, restart counts, resource requests vs limits, readiness

Container Level

CPU throttling, memory usage, OOM events, container states

Networking

Service endpoints, ingress traffic, DNS resolution, network policies

Storage

PersistentVolume usage, claim status, storage class capacity, mount health

What We Monitor

Control Plane

Monitor the heart of your Kubernetes cluster for reliability and performance.

- API Server request latency

- etcd health and latency

- Scheduler queue depth

- Controller manager metrics

- Certificate expiration

Nodes & Kubelet

Track node health and kubelet performance across your cluster.

- Node CPU, memory, disk

- Kubelet health status

- Node conditions (Ready, DiskPressure, etc.)

- Pod capacity and allocation

- Container runtime metrics

Pods & Containers

Deep visibility into workload performance and resource consumption.

- CPU usage and throttling

- Memory usage and OOM kills

- Restart counts and crash loops

- Resource requests vs limits

- Container states and events

Services & Networking

Monitor service endpoints and network connectivity.

- Service endpoint health

- Ingress traffic and latency

- Network policy effectiveness

- DNS resolution times

- Service mesh metrics (Istio, Linkerd)

Workload Resources

Track Deployments, StatefulSets, DaemonSets, and Jobs.

- Deployment replica status

- Rolling update progress

- StatefulSet ordering

- DaemonSet coverage

- Job and CronJob completion

Persistent Storage

Monitor PersistentVolumes and storage performance.

- PV/PVC binding status

- Storage capacity usage

- I/O throughput and latency

- StorageClass provisioning

- Volume mount errors

Kubernetes-Native Features

🔍 Auto-Discovery

Automatically discover and monitor pods, services, and endpoints. No manual configuration needed as workloads scale.

📊 Prometheus Compatible

Native PromQL support. Scrape existing Prometheus endpoints. Use your existing recording rules and alerts.

🏷️ Label-Aware

Filter and aggregate by Kubernetes labels and annotations. Group metrics by namespace, deployment, or custom labels.

📈 Resource Optimization

Right-size resource requests and limits based on actual usage. Identify over-provisioned and under-provisioned workloads.

🔔 Smart Alerting

Pre-configured alerts for common K8s issues: CrashLoopBackOff, pending pods, node NotReady, certificate expiry.

🌐 Multi-Cluster

Monitor multiple Kubernetes clusters from a single dashboard. Compare performance across environments.

📦 Helm Deployment

Deploy the Bleemeo agent with a single Helm chart. GitOps-ready with full customization options.

🔗 OpenTelemetry

Ingest metrics and logs via OpenTelemetry. Correlate infrastructure metrics with application data.

Quick Setup with Helm

Add Bleemeo Helm Repository

Add the official Bleemeo Helm chart repository to your Helm installation.

helm repo add bleemeo-agent https://packages.bleemeo.com/bleemeo-agent/helm-charts
helm repo update

Install the Agent

Deploy the Glouton agent as a DaemonSet with your account credentials.

helm upgrade --install glouton bleemeo-agent/glouton \
  --set account_id="your_account_id" \
  --set registration_key="your_registration_key" \
  --set config.kubernetes.clustername="my_k8s_cluster_name" \
  --set namespace="default"

View Your Cluster

Nodes, pods, and services appear automatically in your Bleemeo dashboard within seconds.
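For GitOps workflows, the settings passed with --set in the install command can also live in a values file. The sketch below assumes the chart accepts the same keys from a file, which is how Helm normally treats --set paths; the file name glouton-values.yaml is arbitrary and the values are the placeholders from the install command.

```shell
# Write the same settings the --set flags carry into a values file.
# Replace the placeholder values with your own credentials and cluster name.
cat > glouton-values.yaml <<'EOF'
account_id: "your_account_id"
registration_key: "your_registration_key"
namespace: "default"
config:
  kubernetes:
    clustername: "my_k8s_cluster_name"
EOF

# Then install from the file instead of repeating --set flags:
#   helm upgrade --install glouton bleemeo-agent/glouton -f glouton-values.yaml
```

Keeping the file in version control lets ArgoCD or Flux apply the same configuration on every sync. Store the registration key according to your own secret-handling policy rather than in plain text.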

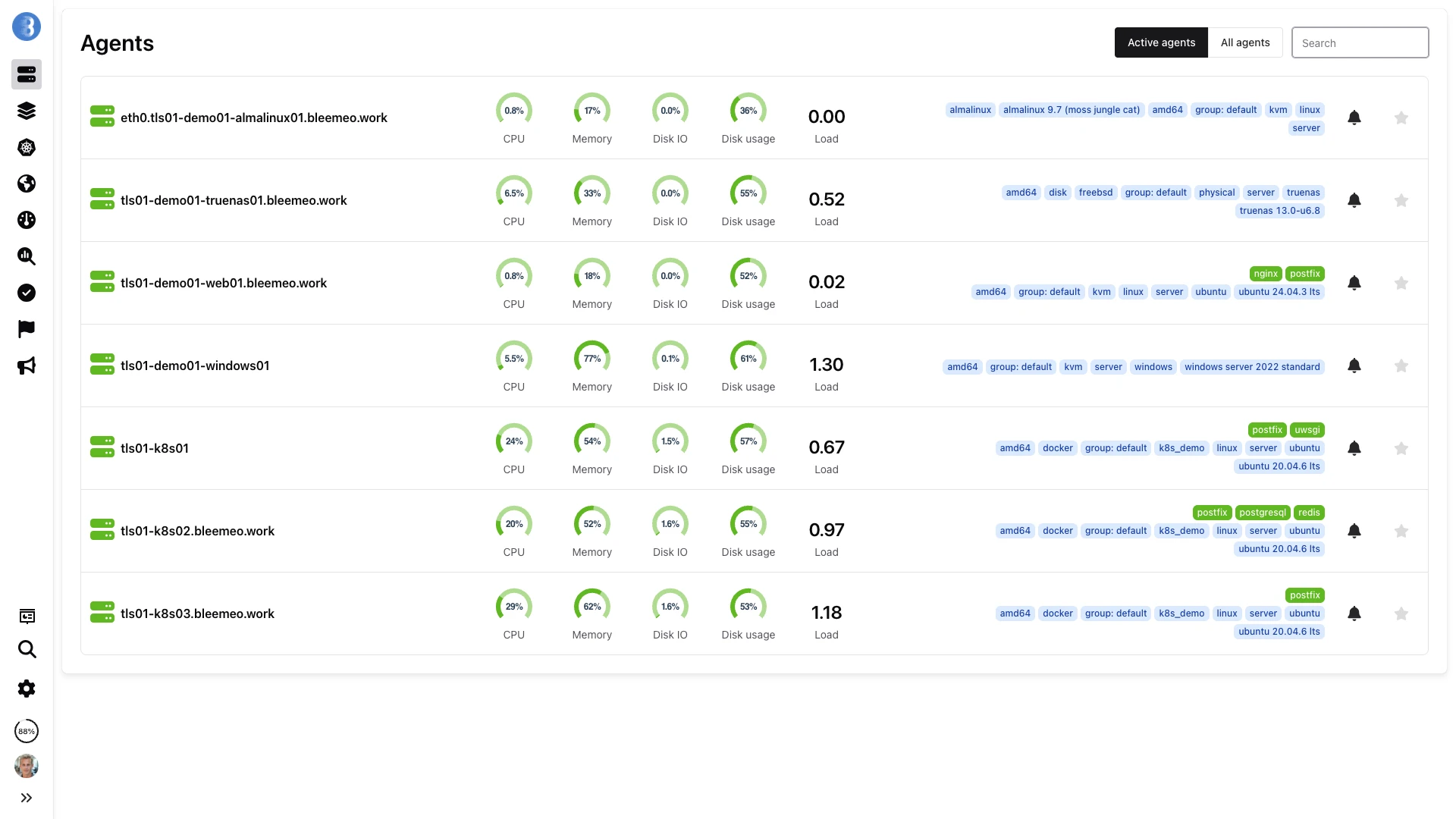

DaemonSet Deployment

Glouton deploys as a DaemonSet, automatically placing one agent pod on every node in your cluster — including nodes added by autoscalers.

- One agent per node, always

- Tolerations for all node types

- Autoscaler-aware coverage

- GitOps-ready Helm chart

DaemonSet Agent Architecture

One Glouton pod per node ensures complete cluster coverage, from control plane health to individual container metrics.

DaemonSet Deployment Model

Glouton deploys as a DaemonSet via Helm, placing exactly one agent pod on every node in your cluster. The Helm chart includes tolerations for all standard node types — GPU nodes, system nodes, and autoscaler-managed nodes all get an agent automatically. Only three environment variables are required: GLOUTON_ACCOUNT_ID, GLOUTON_REGISTRATION_KEY, and GLOUTON_KUBERNETES_CLUSTERNAME. The agent pod requests minimal resources (less than 100 MB memory) and will not compete with your production workloads.

Pod Annotations for Fine-Grained Control

Kubernetes annotations on your pods control how Glouton interacts with each workload. Set glouton.enable: "false" to exclude a pod from monitoring entirely. Use glouton.check.ignore.port.* to skip health checks on specific ports (useful for sidecar containers or debug ports). Add standard Prometheus annotations (prometheus.io/scrape: "true", prometheus.io/port, prometheus.io/path) to expose application-specific metrics that Glouton will scrape and forward to Bleemeo Cloud alongside infrastructure metrics.
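As a rough illustration of the annotations described above, the following sketch writes a pod manifest that exposes Prometheus metrics and skips the port check on a debug port. The pod name, image, ports, and the "true" value on the ignore annotation are assumptions made for the example; check the agent documentation for the exact accepted values.

```shell
# Generate an example manifest; nothing is applied to a cluster here.
cat > annotated-pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: example-app                           # hypothetical pod name
  annotations:
    prometheus.io/scrape: "true"              # ask the agent to scrape this pod
    prometheus.io/port: "8080"                # port serving the metrics
    prometheus.io/path: "/metrics"            # path serving the metrics
    glouton.check.ignore.port.9000: "true"    # assumed value; 9000 is a made-up debug port
    # To exclude the pod entirely, use instead:  glouton.enable: "false"
spec:
  containers:
    - name: app
      image: registry.example.com/example-app:1.0   # hypothetical image
      ports:
        - containerPort: 8080
        - containerPort: 9000
EOF

# Apply it once the values match your workload:
#   kubectl apply -f annotated-pod.yaml
```

In a Deployment, the same annotations go under spec.template.metadata.annotations so that every replica carries them.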

Comprehensive Kubernetes Metrics

Beyond basic pod and node counts, Glouton collects deep Kubernetes metrics: pod counts by state (Running, Pending, Failed, Succeeded), per-container restart counts, CPU and memory usage compared against requests and limits, node and namespace counts, certificate expiration dates for CA and node certificates, and API server plus kubelet health indicators. All metrics are labeled with namespace, owner kind (Deployment, DaemonSet, StatefulSet), and owner name for powerful filtering and aggregation in dashboards.

ConfigMap Customization

Override Glouton defaults per cluster using a Kubernetes ConfigMap. Exclude entire namespaces from monitoring (e.g., kube-system or CI runner namespaces), adjust metric scrape intervals, add custom labels to all metrics from a specific cluster, or configure additional Prometheus scrape targets. The ConfigMap approach integrates naturally with GitOps workflows — store your monitoring configuration alongside your application manifests and let ArgoCD or Flux manage it declaratively.

Pre-Built Kubernetes Alerts

Get notified about common Kubernetes issues before they impact your users.

Pod Issues

- CrashLoopBackOff detected

- Pod stuck in Pending

- High restart count

- OOMKilled containers

Node Issues

- Node NotReady

- High CPU/memory pressure

- Disk space low

- Too many pods scheduled

Cluster Issues

- API server errors

- etcd latency high

- Certificate expiring

- PVC pending

Workload Issues

- Deployment replicas unavailable

- StatefulSet not ready

- Job failed

- HPA at max replicas

Why Bleemeo for Kubernetes?

Real-Time Visibility

See pod creation, scaling events, and failures as they happen. No delay in metrics collection.

Cost Optimization

Identify resource waste and right-size your workloads. Reduce cloud spending without impacting performance.

Lightweight Agent

Glouton uses minimal resources. Less than 100MB memory per node. Won't compete with your workloads.

13-Month Retention

Keep historical data for capacity planning and trend analysis. Compare performance over time.

What Is Kubernetes Monitoring?

Kubernetes monitoring is the practice of collecting, analyzing, and alerting on metrics from every layer of a Kubernetes environment — from the cluster control plane down to individual container processes. Unlike traditional server monitoring, Kubernetes introduces unique challenges: workloads are ephemeral, pods are created and destroyed constantly, and a single application may span dozens of replicas across multiple nodes.

Effective Kubernetes monitoring requires visibility at four distinct layers. The cluster layer tracks control plane health, API server latency, etcd performance, and certificate expiration. The node layer monitors CPU, memory, disk, and kubelet status on each worker node. The workload layer tracks Deployment replicas, StatefulSet ordering, DaemonSet coverage, and Job completion. Finally, the pod and container layer provides resource usage, restart counts, OOM events, and CPU throttling per container.

Without multi-layer monitoring, Kubernetes operators are forced to use kubectl commands and manual log inspection to diagnose issues — a reactive approach that does not scale. A proper monitoring solution like Bleemeo collects metrics from all four layers automatically via DaemonSet deployment, correlates data across layers, and provides pre-built alerts for common failure modes like CrashLoopBackOff, pending pods, and certificate expiration.

Detailed Kubernetes Metrics

Pod Metrics

Track pod counts by state (Running, Pending, Failed, Succeeded), restart counts per container, CPU and memory usage versus requests and limits, and pod age. Labels include namespace, owner kind (Deployment, DaemonSet, StatefulSet), and owner name for easy aggregation and filtering.
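To make the idea of counting pods by state and label concrete, here is a small sketch that groups a made-up pod list by namespace and state. The input rows are invented sample data, not agent output, and the grouping is only an illustration of the aggregation a dashboard performs.

```shell
# Columns: namespace, pod name, state. All rows are invented sample data.
cat > pods.txt <<'EOF'
shop web-1 Running
shop web-2 Running
shop db-0 Pending
batch report-1 Succeeded
batch report-2 Failed
EOF

# Count pods per (namespace, state) pair and sort for stable output.
awk '{ count[$1 " " $3]++ } END { for (k in count) print k, count[k] }' pods.txt \
  | LC_ALL=C sort > pod-states.txt

cat pod-states.txt
# batch Failed 1
# batch Succeeded 1
# shop Pending 1
# shop Running 2
```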

Resource Requests vs Limits

Compare what pods requested (CPU and memory requests) with what they actually consume. Identify over-provisioned workloads wasting resources and under-provisioned ones at risk of CPU throttling or OOMKill. This data is essential for right-sizing resource definitions in your deployment manifests.
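The comparison can be sketched as a simple ratio of observed usage to the request. The workload names and numbers below are invented, and the 30% and 90% thresholds are arbitrary assumptions for the example, not Bleemeo defaults.

```shell
# Columns: workload, CPU request (millicores), average CPU usage (millicores).
# All rows are invented sample data.
cat > usage.txt <<'EOF'
api-gateway 1000 150
worker 500 480
batch-report 200 90
EOF

# Flag workloads using less than 30% or more than 90% of their request.
awk '{
  pct = $3 * 100 / $2
  verdict = "ok"
  if (pct < 30) verdict = "over-provisioned"
  else if (pct > 90) verdict = "under-provisioned"
  printf "%s %.0f%% %s\n", $1, pct, verdict
}' usage.txt > rightsizing.txt

cat rightsizing.txt
# api-gateway 15% over-provisioned
# worker 96% under-provisioned
# batch-report 45% ok
```

In practice the ratio should be taken over a long enough window to include peak load, since an average alone can hide short bursts that trigger throttling.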

Cluster Health

Monitor total node count, Ready vs NotReady nodes, namespace count, and overall cluster status. Track API server availability, etcd latency, and scheduler queue depth. These metrics help you assess the overall health and capacity of your Kubernetes infrastructure.

Certificate Expiration

Track the expiration dates of CA certificates and node certificates used for Kubernetes internal communication. Get alerted before certificates expire — a common cause of sudden cluster failures that is entirely preventable with automated monitoring.
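The alerting logic behind an expiry check is a date subtraction against a threshold. The sketch below uses fixed Unix timestamps and a hypothetical 30-day threshold so that it runs without a cluster; the function names and certificate names are illustrative and are not part of the agent.

```shell
# days_left NOT_AFTER_EPOCH NOW_EPOCH
# Prints the whole number of days between two Unix timestamps
# (integer division, so partial days are dropped).
days_left() {
  echo $(( ($1 - $2) / 86400 ))
}

# check_cert NAME NOT_AFTER_EPOCH NOW_EPOCH THRESHOLD_DAYS
# Prints WARNING when fewer than THRESHOLD_DAYS remain, OK otherwise.
check_cert() {
  left=$(days_left "$2" "$3")
  if [ "$left" -lt "$4" ]; then
    echo "WARNING $1 expires in $left days"
  else
    echo "OK $1 expires in $left days"
  fi
}

# Fixed "now" so the example is reproducible; real checks would use the
# current time and the notAfter date read from the certificate, for example
# with: openssl x509 -noout -enddate -in <certificate file>
now=1700000000
check_cert ca.crt $(( now + 400 * 86400 )) "$now" 30
check_cert kubelet.crt $(( now + 20 * 86400 )) "$now" 30
# OK ca.crt expires in 400 days
# WARNING kubelet.crt expires in 20 days
```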

Kubelet & Node Conditions

Monitor kubelet health status on each node, node conditions (Ready, DiskPressure, MemoryPressure, PIDPressure), and container runtime health. Detect nodes that are degraded before they start evicting pods or become NotReady.

Network & Ingress

Track per-pod network receive and transmit bytes, dropped packets, and error counts. Monitor Ingress controller request rates, response latencies, and HTTP error ratios. Correlate network metrics with pod restarts or service degradation to identify connectivity issues, DNS resolution failures, or misconfigured network policies.

Use Cases

Troubleshooting Pod Failures

When a pod enters CrashLoopBackOff, you need to know why immediately. Bleemeo shows the restart count, the last exit code, container logs, and correlated node-level metrics. Determine whether the crash is caused by application errors, OOM kills, or underlying node resource pressure — all from a single dashboard.

Right-Sizing Workloads

Over-provisioned resource requests waste cluster capacity and increase cloud costs. Under-provisioned requests cause throttling and OOM kills. Use Bleemeo's resource request vs actual usage metrics over time to identify the optimal CPU and memory requests for each workload, reducing waste while preventing resource contention.

Capacity Planning

Track cluster resource utilization trends over weeks and months. Identify when nodes are approaching capacity limits and plan scaling events before pods start pending due to insufficient resources. Use 13 months of historical data to forecast seasonal patterns and budget for infrastructure growth.

Multi-Cluster Management

Monitor development, staging, and production clusters from a single dashboard. Compare resource utilization across environments, detect configuration drift between clusters, and ensure that staging clusters mirror production sizing. Each cluster is identified by its configured name for easy filtering.
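One way to give each cluster its own name is to run the same Helm install once per kube context with a different config.kubernetes.clustername. The sketch below only prints the commands; the context and cluster names are hypothetical, and the credentials are the placeholders from the setup section.

```shell
# Each entry is "<kube context>:<cluster name>"; all names are hypothetical.
for pair in dev-context:dev-cluster staging-context:staging-cluster prod-context:prod-cluster; do
  context=${pair%%:*}   # text before the colon
  cluster=${pair##*:}   # text after the colon
  # Print the command rather than running it, so it can be reviewed first.
  echo "helm upgrade --install glouton bleemeo-agent/glouton --kube-context $context --set account_id=your_account_id --set registration_key=your_registration_key --set config.kubernetes.clustername=$cluster"
done > install-commands.txt

cat install-commands.txt
```

Review the generated file, then run each line against the matching cluster.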

GitOps Deployment Validation

After a Flux or ArgoCD deployment, monitor the rollout in real time. Track new pod creation, old pod termination, and replica availability during rolling updates. Detect failed deployments (stuck rollouts, crash loops in new versions) and correlate deployment timing with metric changes to validate that releases perform as expected.

Cost Optimization & Chargeback

Analyze resource consumption per namespace to allocate infrastructure costs to teams or projects. Identify namespaces with consistently low CPU and memory utilization that are over-provisioned. Use historical usage data to right-size cluster node pools, switch to spot or preemptible instances for tolerant workloads, and reduce overall Kubernetes infrastructure spending.
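A proportional chargeback can be sketched as each namespace's share of total usage multiplied by the cluster cost. The namespaces, core-hour figures, and the monthly cost of 2000 are invented for the example; a real allocation would typically weigh memory and storage as well.

```shell
# Columns: namespace, CPU core-hours consumed in the period (invented data).
cat > ns-usage.txt <<'EOF'
team-a 600
team-b 300
team-c 100
EOF

CLUSTER_COST=2000   # hypothetical cost for the same period, in any currency

# Allocate the cost in proportion to each namespace's share of core-hours.
awk -v cost="$CLUSTER_COST" '
  { used[$1] += $2; total += $2 }
  END { for (ns in used) printf "%s %.2f\n", ns, cost * used[ns] / total }
' ns-usage.txt | LC_ALL=C sort > chargeback.txt

cat chargeback.txt
# team-a 1200.00
# team-b 600.00
# team-c 200.00
```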

Kubernetes Monitoring Best Practices

Deploy as a DaemonSet

Run the monitoring agent as a DaemonSet so every node automatically gets an agent pod — including nodes added by autoscalers. This guarantees complete cluster coverage without manual intervention. Bleemeo's Helm chart handles this by default, including proper tolerations and resource limits.

Use Prometheus Annotations for Custom Metrics

Add prometheus.io/scrape: "true" to your pod annotations to expose application-specific metrics via the Prometheus format. Bleemeo's agent discovers these endpoints automatically and sends the metrics to the cloud. This is the standard Kubernetes-native approach for custom application metrics without requiring additional configuration.

Always Set Resource Requests and Limits

Pods without resource requests cannot be right-sized because there is no baseline to compare against. Always set CPU and memory requests in your deployment manifests. Bleemeo then compares actual usage to requested resources, enabling data-driven right-sizing decisions that reduce waste and prevent resource contention.

Monitor Certificate Expiration

Kubernetes uses TLS certificates for internal communication between the API server, kubelet, and etcd. Expired certificates can cause sudden, cluster-wide failure. Bleemeo tracks certificate expiration dates and alerts you before they expire, giving you time to rotate certificates proactively instead of discovering the problem during an outage.

Correlate Metrics with Container Logs

A pod restart count increasing tells you something is wrong. The container logs tell you exactly what. Enable log collection alongside metrics for the fastest root cause analysis. Bleemeo's agent collects both from the same DaemonSet, and the cloud platform displays them together, linked by pod name and timestamp.

Want to go further?

Read the Documentation

Frequently Asked Questions

Everything you need to know about Bleemeo's Kubernetes monitoring

How do I deploy Bleemeo in my Kubernetes cluster?

Bleemeo deploys via Helm chart as a DaemonSet, placing one Glouton agent on each node. Simply add the Bleemeo Helm repository, then run helm upgrade --install with your account credentials and cluster name. The agent automatically discovers all pods and services. You can also deploy using plain kubectl with our provided manifests. GitOps tools like ArgoCD and Flux are fully supported.

What Kubernetes metrics does Bleemeo collect?

Bleemeo collects comprehensive metrics including: Pod metrics (counts by state, restart counts, CPU/memory usage vs requests/limits), Node metrics (CPU, memory, disk, network, kubelet status), Cluster metrics (node count, namespace count, API status), and Certificate expiration (CA and node certificates). Metrics are labeled by namespace, owner kind (Deployment, DaemonSet, StatefulSet), and owner name for easy filtering.

Does Bleemeo auto-discover services in my pods?

Yes, automatic service discovery is a core feature. The Bleemeo agent detects all running services in your pods (databases, web servers, message queues, etc.) and starts monitoring them without manual configuration. It recognizes 100+ services out of the box. As pods scale up or down, monitoring follows automatically, with no reconfiguration needed for ephemeral workloads.

Can I scrape Prometheus metrics from my applications?

Yes, Bleemeo supports Prometheus-style scraping via pod annotations. Add prometheus.io/scrape: "true" to your pods, and optionally specify prometheus.io/path and prometheus.io/port for custom metrics endpoints. The agent automatically discovers and scrapes these endpoints. You can also use PromQL to query metrics in your dashboards.

What are the resource requirements for the agent?

The Glouton agent is designed to be lightweight. It typically uses less than 100MB of memory and minimal CPU per node. The agent won't compete with your production workloads for resources. Resource requests and limits can be customized in the Helm values if needed. The agent is optimized for high-density environments with many pods per node.

Which Kubernetes distributions are supported?

Bleemeo works with all major Kubernetes distributions: Managed services (EKS, GKE, AKS, DigitalOcean Kubernetes), Self-managed (kubeadm, k3s, k0s, microk8s), and Enterprise distributions (OpenShift, Rancher, Tanzu). We support Kubernetes 1.19+. The agent adapts to different container runtimes including containerd, CRI-O, and Docker.

Can I monitor multiple Kubernetes clusters?

Yes, Bleemeo supports multi-cluster monitoring. Each cluster appears as a separate entity in your dashboard with its own name (configured via config.kubernetes.clustername). You can view all clusters in a unified dashboard, compare metrics across clusters, and drill down into individual cluster details. This is ideal for managing development, staging, and production environments.

What alerts are pre-configured for Kubernetes?

Bleemeo includes pre-built alerts for common Kubernetes issues: Pod issues (CrashLoopBackOff, pending pods, high restart counts, OOMKilled), Node issues (NotReady, disk/memory pressure), Cluster issues (API server errors, certificate expiring), and Workload issues (deployment replicas unavailable, failed jobs). You can customize thresholds or create additional alerts.

How do I track resource requests vs actual usage?

Bleemeo collects both resource requests/limits and actual usage for CPU and memory. Dashboards show the comparison between what pods requested and what they're actually using, helping you identify over-provisioned workloads (wasting resources) and under-provisioned ones (at risk of throttling or OOM). This enables effective right-sizing of your workloads.

Does Bleemeo monitor container logs?

Yes, with log collection enabled, Glouton automatically captures logs from all containers in your Kubernetes cluster. Logs are collected from container stdout/stderr without additional configuration. You can apply custom parsers and filters using pod annotations (glouton.log_format, glouton.log_filter). Logs can be correlated with metrics for comprehensive troubleshooting.

Start Monitoring Your Kubernetes Clusters

Deploy in minutes. Get full visibility into your K8s infrastructure.