Intelligent Alerting & Notifications

Get notified instantly when issues arise. Smart alert routing, multiple notification channels, and ML-powered anomaly detection ensure you're always informed without alert fatigue.

How Bleemeo Alerting Works

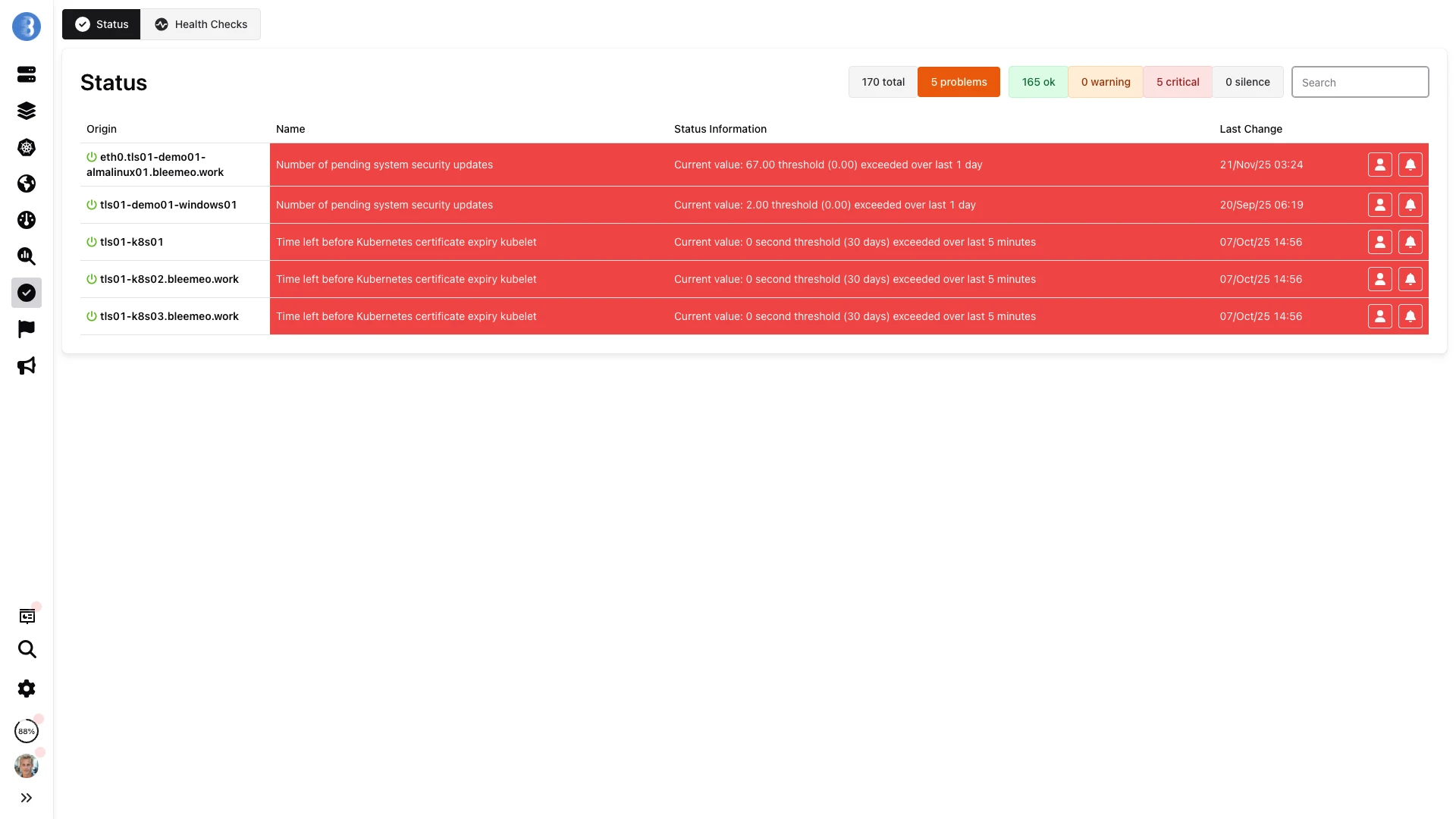

Bleemeo alerting follows a streamlined pipeline designed to catch real problems fast while filtering out transient noise. The flow is straightforward: Detection of a metric anomaly, Event Creation in the Bleemeo Cloud, Notification sent through your configured channels, and Resolution tracked automatically when the issue clears. Every step is logged so you can review exactly what happened and when.

At the heart of the detection layer sits the Glouton agent, Bleemeo's lightweight open-source collector. Glouton samples your infrastructure metrics at 10-second resolution, giving you near-real-time visibility into CPU usage, memory consumption, disk utilization, network throughput, and hundreds of other indicators. When a metric crosses a configured threshold, an event is created in the Bleemeo Cloud platform and the alert evaluation pipeline kicks in.

One of the most appreciated aspects of Bleemeo is its pre-configured thresholds. Out of the box, sensible defaults activate automatically for the most common infrastructure metrics including CPU load, memory pressure, disk space, network errors, and disk I/O latency. There is no manual setup required for standard monitoring scenarios. You connect a server, install the agent, and alerting begins working immediately.

To avoid transient spikes triggering false alerts, Bleemeo uses a soft-status grace period. By default, a metric must remain in a problematic state for 5 minutes (300 seconds) before the alert transitions from soft to hard status and a notification is dispatched. This prevents a brief CPU spike during a deployment or a momentary network hiccup from waking up your on-call engineer at 3 AM.
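The soft-to-hard transition described above can be sketched in a few lines of Python. This is an illustrative model only, not Bleemeo's implementation: the `SoftHardTracker` class and its method names are hypothetical, and only the 300-second default comes from the text.

```python
from dataclasses import dataclass
from typing import Optional

GRACE_PERIOD_SECONDS = 300  # default from the text: 5 minutes


@dataclass
class SoftHardTracker:
    """Hypothetical tracker for how long a metric has been in a bad state."""

    grace_period: float = GRACE_PERIOD_SECONDS
    problem_since: Optional[float] = None  # timestamp of the first bad sample

    def observe(self, timestamp: float, is_problem: bool) -> str:
        """Return 'ok', 'soft', or 'hard' for the sample taken at `timestamp`."""
        if not is_problem:
            self.problem_since = None  # recovery resets the timer
            return "ok"
        if self.problem_since is None:
            self.problem_since = timestamp
        elapsed = timestamp - self.problem_since
        # Only a 'hard' status would lead to a notification in this model.
        return "hard" if elapsed >= self.grace_period else "soft"
```

In this sketch, a spike that clears before 300 seconds never leaves the soft state, so no notification would be sent for it.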

Every metric supports two severity levels: Warning and Critical. Warning thresholds signal that a resource is approaching a dangerous zone, while Critical thresholds indicate an immediate problem that demands attention. Both levels are fully customizable per server group, allowing you to apply stricter limits to production servers while keeping more relaxed policies for development environments.
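As a rough illustration of per-group severity levels, the sketch below classifies a metric value against Warning and Critical limits. The group names, the threshold numbers, and the `classify` helper are all assumptions made for the example; they are not Bleemeo defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    warning: float
    critical: float


# Hypothetical per-group policies; the numbers are illustrative only.
POLICIES = {
    "production": Thresholds(warning=80.0, critical=90.0),
    "development": Thresholds(warning=90.0, critical=98.0),
}


def classify(value: float, group: str) -> str:
    """Return 'ok', 'warning', or 'critical' for a value in a server group."""
    limits = POLICIES[group]
    if value >= limits.critical:
        return "critical"
    if value >= limits.warning:
        return "warning"
    return "ok"
```

With these example numbers, a CPU reading of 85% is a warning on a production server but still within limits on a development server.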

For advanced use cases, recording rules let you create derived metrics using PromQL expressions. You can aggregate, transform, or combine raw metrics and then alert on the computed result. For example, you might define a recording rule that calculates the total CPU usage across all Cassandra containers in a cluster and trigger a critical alert when that aggregate value exceeds a capacity threshold. This approach gives you the expressiveness of Prometheus alerting within the managed Bleemeo platform.
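To make the Cassandra example concrete, here is a small Python model of what such a derived metric computes. It is not PromQL and not Bleemeo's recording-rule syntax; the container names, the per-container CPU values, and the capacity threshold are hypothetical.

```python
from typing import Dict


def total_cpu(samples: Dict[str, float], name_prefix: str) -> float:
    """Sum CPU usage over containers whose name starts with `name_prefix`."""
    return sum(v for name, v in samples.items() if name.startswith(name_prefix))


def aggregate_status(
    samples: Dict[str, float], name_prefix: str, critical_threshold: float
) -> str:
    """Alert on the computed aggregate rather than on any single container."""
    total = total_cpu(samples, name_prefix)
    return "critical" if total > critical_threshold else "ok"
```

The point of the design is that no individual container needs to cross a limit: the alert fires on the combined value.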

Alerting Features

Email Alerts

Instant email notifications arrive with rich HTML formatting, embedded metric graphs showing the exact moment thresholds were crossed, and direct links to the relevant dashboard. Related alerts are grouped into threads so your inbox stays organized, and you can configure multiple recipients per rule to ensure the right people are always in the loop.

SMS Notifications

Critical alerts via SMS ensure you are notified even when away from your computer. With global carrier coverage, messages reach your team anywhere in the world. SMS can be configured for critical-only severity to reserve this high-priority channel for genuine emergencies, and built-in rate limiting with cost control settings prevents notification floods during major incidents.

Webhook Integration

Send structured JSON payloads containing alert details, metric values, host information, and timestamps to any HTTP endpoint. Out-of-the-box integrations work with Slack, PagerDuty, Microsoft Teams, OpsGenie, VictorOps, and any custom webhook-compatible service. Use webhooks to drive automated remediation workflows or feed alerts into your existing incident management platform.
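A receiving service might decode such a payload along the following lines. The field names (`host`, `metric`, `value`, `severity`, `timestamp`) are assumptions chosen to match the categories listed above; the actual payload schema should be taken from the Bleemeo documentation.

```python
import json
from dataclasses import dataclass


@dataclass
class Alert:
    host: str
    metric: str
    value: float
    severity: str
    timestamp: str


def parse_alert(body: bytes) -> Alert:
    """Decode a webhook body. All field names here are hypothetical."""
    data = json.loads(body)
    return Alert(
        host=data["host"],
        metric=data["metric"],
        value=float(data["value"]),
        severity=data["severity"],
        timestamp=data["timestamp"],
    )


def summarize(alert: Alert) -> str:
    """Build a one-line summary, e.g. for a chat message or ticket title."""
    return (
        f"[{alert.severity.upper()}] {alert.metric}={alert.value} "
        f"on {alert.host} at {alert.timestamp}"
    )
```

A handler built on this could forward `summarize(...)` to a chat tool or use the parsed fields to open a ticket.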

Mobile Push

Native iOS and Android push notifications put critical alerts directly on your phone lock screen. One tap takes you straight into the relevant metric view within the Bleemeo mobile app. Critical alerts can be configured to override Do Not Disturb mode, ensuring that urgent infrastructure issues are never missed even outside working hours.

ML Anomaly Detection

Machine learning algorithms continuously study your metrics to learn what "normal" looks like for every service and host. Over time, the system builds behavioral baselines and alerts when it detects gradual performance degradation, unusual traffic patterns, or subtle shifts that static thresholds would miss. No manual threshold configuration is needed for anomaly-based alerts.
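The idea of alerting on deviation from a learned baseline, rather than on a fixed limit, can be illustrated with a deliberately simple z-score check. This is a stand-in technique for explanation only; the source does not say which algorithms Bleemeo uses, and the `is_anomalous` function and its threshold of 3 are assumptions.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(
    history: Sequence[float], value: float, z_threshold: float = 3.0
) -> bool:
    """Flag `value` if it lies far from the baseline learned from `history`."""
    if len(history) < 2:
        return False  # not enough data to describe "normal" yet
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return value != baseline  # perfectly flat history: any change stands out
    return abs(value - baseline) / spread > z_threshold
```

Note that no absolute threshold appears anywhere: the same function adapts to a host that normally idles at 10% and one that normally runs at 70%.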

Alert Routing

Route alerts by service type, severity level, time of day, or custom tags so the right team always gets the right notification. Database alerts go to your DBAs, server alerts go to ops, and application errors go to developers. Each route can use different notification channels and escalation policies, giving you fine-grained control over your incident response workflow.
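One way to picture routing is as an ordered list of routes where the first match wins. The sketch below models only service type and severity; the `Route` structure, the team names, and the first-match rule are assumptions for illustration, not a description of how Bleemeo evaluates routes.

```python
from dataclasses import dataclass
from typing import List, Optional

SEVERITY_RANK = {"warning": 1, "critical": 2}


@dataclass
class Route:
    team: str
    channels: List[str]
    service_type: Optional[str] = None  # None matches any service type
    min_severity: str = "warning"


def pick_route(
    routes: List[Route], service_type: str, severity: str
) -> Optional[Route]:
    """Return the first route matching the alert, or None if nothing matches."""
    for route in routes:
        if route.service_type not in (None, service_type):
            continue
        if SEVERITY_RANK[severity] < SEVERITY_RANK[route.min_severity]:
            continue
        return route
    return None
```

Placing the most specific routes first and a catch-all route last gives each alert exactly one destination.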

Notification Channels

Email

- Rich HTML formatting

- Embedded metric graphs

- Multiple recipients

- Thread grouping

- Direct dashboard links

- Configurable severity filters

SMS

- Global coverage

- Critical alerts only

- Rate limiting

- Cost control

- International delivery

- Escalation fallback

Webhooks

- Slack integration

- PagerDuty support

- Microsoft Teams

- Custom endpoints

- OpsGenie

- Custom JSON payloads

Mobile App

- Push notifications

- In-app details

- Quick actions

- Alert history

- iOS & Android

- Critical alert override

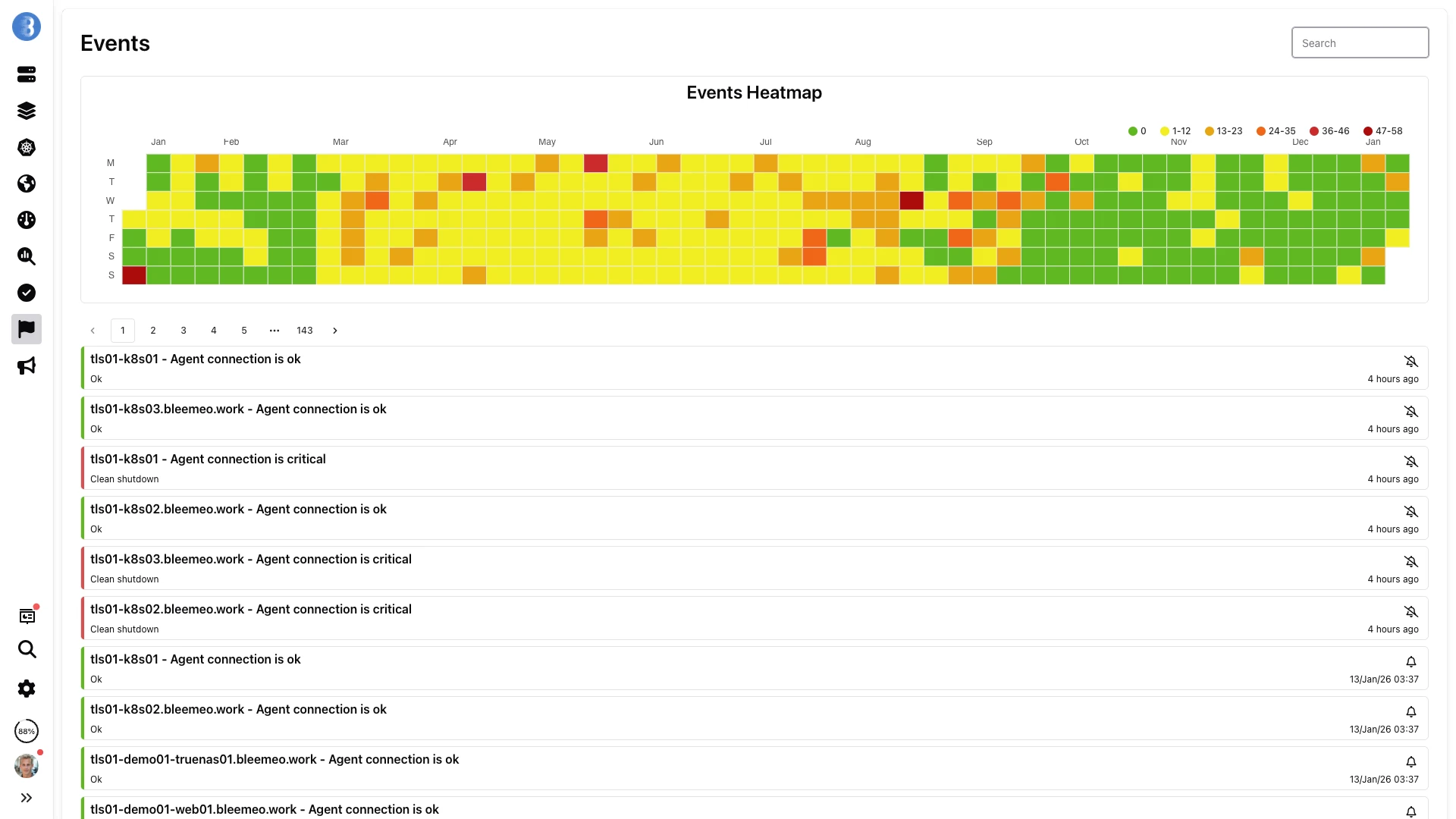

Complete Event History

Track every event in your infrastructure with a comprehensive timeline. See when alerts triggered, what changed, and how issues were resolved. The event history is invaluable for post-incident reviews: filter by service, severity, or time range to reconstruct exactly what happened during an outage. Export event data for compliance reporting or to feed into your incident management workflow.

- Real-time event streaming

- Filterable by service, severity, and time

- Correlation between related events

- Export for post-incident analysis

- Alert acknowledgment tracking

- Mean time to resolution metrics
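For readers who export event data, mean time to resolution can be computed from pairs of trigger and resolution timestamps. This is a minimal sketch that assumes the export has already been reduced to such pairs; the export format itself is not specified here.

```python
from datetime import datetime
from typing import Iterable, Tuple


def mean_time_to_resolution(
    incidents: Iterable[Tuple[datetime, datetime]]
) -> float:
    """Return the average (resolved - triggered) duration in seconds."""
    durations = [
        (resolved - triggered).total_seconds()
        for triggered, resolved in incidents
    ]
    if not durations:
        raise ValueError("at least one resolved incident is required")
    return sum(durations) / len(durations)
```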

Smart Alert Management

Alert Grouping

Related alerts originating from the same server or service are automatically consolidated into a single notification, dramatically reducing noise while preserving full context. Instead of receiving fifty individual CPU alerts when a cluster node struggles, you get one grouped notification that summarizes every affected metric and links to the relevant dashboards.
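Grouping by origin can be sketched as a simple bucketing step. The example below groups by host only and uses hypothetical host and metric names; how Bleemeo decides that alerts are related is not detailed in this page.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def group_alerts(alerts: Iterable[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Bucket (host, metric) alerts so each host yields one notification."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for host, metric in alerts:
        grouped[host].append(metric)
    return dict(grouped)


def render(host: str, metrics: List[str]) -> str:
    """Summarize every affected metric for a host in a single line."""
    return f"{host}: {len(metrics)} alert(s) ({', '.join(metrics)})"
```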

Escalation Policies

Define multi-level escalation workflows that ensure critical issues never fall through the cracks. If a primary on-call engineer does not acknowledge an alert within a configurable time window, the notification automatically escalates to the next level with different contacts and channels. A typical chain might progress from email to SMS to phone call, guaranteeing that urgent problems reach someone who can act.
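An escalation chain can be modelled as a list of levels, each becoming active after the alert has gone unacknowledged for a given time. The delays below (10 and 20 minutes) and the `Level` structure are illustrative assumptions; only the email, SMS, phone call progression comes from the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Level:
    after_seconds: int  # time without acknowledgment before this level applies
    channel: str


def current_level(levels: List[Level], seconds_unacknowledged: int) -> Level:
    """Return the highest level reached; `levels` must be sorted by delay."""
    active = levels[0]
    for level in levels:
        if seconds_unacknowledged >= level.after_seconds:
            active = level
    return active
```

Acknowledging the alert would simply stop this clock, so later levels are never reached.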

Maintenance Windows

Specify a time range and the affected hosts or services, and Bleemeo will hold back alert notifications for the duration. Monitoring continues uninterrupted so you still collect data, but your team is not disturbed by expected disruptions. Maintenance windows support recurring schedules for regular patching cycles, deployment windows, or weekly restart routines.
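The core check behind a maintenance window is whether an alert's host and time fall inside it. The sketch below covers a single one-off window; recurring schedules are left out, and the `MaintenanceWindow` class is a hypothetical model rather than Bleemeo's data structure.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import FrozenSet


@dataclass(frozen=True)
class MaintenanceWindow:
    start: datetime
    end: datetime
    hosts: FrozenSet[str]

    def suppresses(self, host: str, at: datetime) -> bool:
        """True if a notification for `host` at time `at` should be held back."""
        return host in self.hosts and self.start <= at < self.end
```

Only the notification decision is affected: metric collection is outside this check and carries on as usual.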

Alert Dependencies

When a parent service goes down, child alerts are automatically suppressed to prevent alert storms from cascading failures. For example, if a network switch becomes unreachable, Bleemeo suppresses the individual host alerts behind that switch because they are all consequences of the same root cause. This keeps your team focused on the actual problem instead of drowning in symptomatic noise.
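Dependency-based suppression amounts to keeping only the failures that have no failed ancestor. The following sketch assumes dependencies form a simple parent mapping; the node names and the `root_cause_alerts` helper are hypothetical.

```python
from typing import Dict, Set


def root_cause_alerts(down: Set[str], parent_of: Dict[str, str]) -> Set[str]:
    """Return the down nodes that have no down ancestor."""

    def has_down_ancestor(node: str) -> bool:
        seen = {node}  # guards against accidental cycles in the mapping
        parent = parent_of.get(node)
        while parent is not None and parent not in seen:
            if parent in down:
                return True
            seen.add(parent)
            parent = parent_of.get(parent)
        return False

    return {node for node in down if not has_down_ancestor(node)}
```

In the switch example, the hosts behind the switch are still recorded as down, but only the switch itself would produce a notification.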

Flexible Notification Configuration

Three-step setup: define the scope, pick the problem, choose the targets

1. Scope

Choose what to monitor: any server, specific servers, server groups, or tag-based selection. Group servers by environment (production, staging, development) for different alert policies. You can also scope notifications to individual services running on those servers, giving you granular control over which components generate alerts.

2. Problem

Define what triggers a notification: specific metric thresholds, recording rule violations, server connection loss, or service unavailability. Set warning and critical levels independently to distinguish between situations that need attention soon and those that demand immediate action. Combine multiple conditions for sophisticated alerting logic.

3. Targets

Route alerts to the right people: contact groups, individual team members, or external systems via webhooks. Configure time constraints such as business hours only or weekends only, and set repeat delays for persistent issues that remain unresolved. Each target can receive notifications through its preferred channel.
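The three steps map naturally onto a single rule object with a scope, a problem, and targets. The sketch below is a hypothetical model: the field names are invented for illustration, and "business hours" is assumed to mean Monday to Friday, 09:00 to 18:00.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import FrozenSet, List


@dataclass
class NotificationRule:
    scope_tags: FrozenSet[str]  # 1. Scope: tags a server must carry
    problem_severity: str       # 2. Problem: severity that triggers the rule
    targets: List[str]          # 3. Targets: who gets notified
    business_hours_only: bool = False

    def applies(
        self, server_tags: FrozenSet[str], severity: str, at: datetime
    ) -> bool:
        """True if this rule should notify its targets for the given alert."""
        if not self.scope_tags <= server_tags:
            return False
        if severity != self.problem_severity:
            return False
        if self.business_hours_only:
            is_weekday = at.weekday() < 5  # Monday is 0
            return is_weekday and 9 <= at.hour < 18
        return True
```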

On-Call Scheduling & Contact Groups

Managing who gets notified and when is just as important as the alerts themselves. Bleemeo's contact groups let you organize team members by role or responsibility — a database team, a networking team, a platform team — and route alerts to the right group based on the service or infrastructure involved.

On-call scheduling ensures that critical alerts always reach someone who can act. Define rotation schedules so that on-call responsibilities are shared fairly across your team. When an engineer is on call, they receive alerts through their preferred channels — mobile push notifications during work hours, SMS or phone call escalation after hours.

Silence rules let you temporarily suppress alerts during planned maintenance without disabling monitoring. Schedule silence windows in advance for regular maintenance, or create ad-hoc silences when you need to work on a known issue without alert noise. Monitoring continues normally during silenced periods, so you still have full metric data when the window ends.

Want to go further?

Read the Documentation

Frequently Asked Questions

Everything you need to know about Bleemeo's alerting system

What notification channels are supported?

Bleemeo supports multiple notification channels: Email with rich HTML formatting and embedded metric graphs, SMS for critical alerts with global coverage, Webhooks for integration with Slack, PagerDuty, Microsoft Teams, OpsGenie, and custom endpoints, and Mobile push notifications through the Bleemeo iOS and Android apps. You can configure multiple channels per alert rule.

How do I create alert rules?

Bleemeo provides pre-configured alert rules for common infrastructure issues (high CPU, low disk space, service down, etc.) that activate automatically when you connect servers. For custom alerts, you can define threshold-based rules on any metric with configurable warning and critical levels. Advanced users can use PromQL queries for complex alerting conditions.

What is ML-based anomaly detection?

Bleemeo uses machine learning to automatically detect unusual patterns in your metrics. Rather than requiring static thresholds, the system learns what's "normal" for each metric over time. When behavior deviates significantly from expected patterns, an alert is triggered. This catches issues that would be missed by traditional threshold alerts, like gradual performance degradation or unusual traffic patterns.

Can I route alerts to different teams?

Yes, Bleemeo supports alert routing based on multiple criteria. You can route alerts by service type (database alerts to DBAs, web server alerts to ops), severity level (critical to on-call, warnings to email), time of day (different contacts for business hours vs after-hours), and custom tags. Each route can use different notification channels.

How do I prevent alert fatigue?

Bleemeo includes several features to reduce alert noise: Alert grouping combines related alerts into single notifications, Alert dependencies suppress downstream alerts when root cause issues are detected, Rate limiting prevents notification floods, and Maintenance windows suppress alerts during planned work. These ensure you're notified about real issues without being overwhelmed.

How does alert escalation work?

You can define multi-level escalation policies. If an alert isn't acknowledged within a specified time, it automatically escalates to the next level, for example from email to SMS, or from the primary on-call engineer to a backup. This ensures critical issues don't get lost even if the first responder is unavailable. Each escalation level can have different contacts and channels.

What are maintenance windows?

Maintenance windows let you suppress alerts during planned work. You specify a time range and optionally which hosts or services are affected. Monitoring continues during the window (so you have data), but alerts are held back. This prevents false alarms during deployments, updates, or scheduled maintenance. You can create recurring windows for regular maintenance schedules.

Can I see alert history?

Yes, Bleemeo provides a complete event history showing when alerts triggered, changed state, and resolved. You can filter by service, severity, and time range. This history is valuable for post-incident analysis, understanding recurring issues, and tracking mean time to resolution (MTTR). Events can be exported for reporting or compliance purposes.

Do alerts work with webhooks for custom integrations?

Yes, Bleemeo's webhook integration sends JSON payloads to any HTTP endpoint. The payload includes alert details, metric values, host information, and timestamps. This enables integration with custom ticketing systems, incident management platforms, chat tools, or automation workflows. You can customize which alerts trigger webhooks and to which endpoints.

How fast are alert notifications?

Notifications are sent within seconds of an alert being confirmed. Metrics are collected every 10 seconds and alerts are evaluated continuously; with the default settings, a problem must first persist through the 5-minute soft-status grace period before a notification is dispatched. Once dispatched, email and webhook notifications typically arrive within seconds; SMS may take slightly longer depending on the carrier. Push notifications to mobile apps are near-instantaneous for users with the app installed.

Never Miss a Critical Issue

Set up intelligent alerting in minutes. No complex configuration required.
